Real World Application Performance

Single-point metrics have traditionally been used as the prime measure for assessing the performance of a system's interconnect fabric. However, such metrics are typically not sufficient to determine the performance of real-world applications, which use a variety of message sizes and a diverse mixture of communication patterns. Moreover, the interconnect architecture becomes a key factor and greatly influences overall performance and efficiency.

Mellanox's adapter architecture is based on a full offload approach with RDMA capabilities, removing the traditional protocol overhead from the CPU and increasing processor efficiency. QLogic's architecture is based on an on-load approach, in which the CPU must handle the transport layer, error handling and so on. This increases CPU overhead and reduces processor efficiency, leaving fewer cycles for useful application processing.

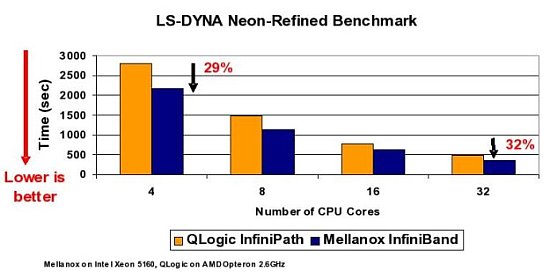

The following chart compares Mellanox InfiniBand and QLogic InfiniPath interconnects using the LS-DYNA Neon-Refined benchmark (frontal crash with an initial speed of 31.5 miles/hour, model size of 535k elements, simulation length of 150 ms; model created by the National Crash Analysis Center (NCAC) at George Washington University).

Using a best-case hardware scenario, Mellanox InfiniBand shows higher performance and better scaling than QLogic InfiniPath (note the improvement from 4 cores to 32 cores). Livermore Software Technology Corporation's (LSTC) LS-DYNA, a general-purpose transient dynamic finite element program capable of simulating complex real-world problems, is a latency-sensitive application. Although QLogic shows lower latency as a single-point measure of performance, Mellanox's architecture delivers higher system performance, efficiency and scalability.
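Scaling comparisons of this kind are commonly expressed as speedup and parallel efficiency relative to the smallest run. The following is a minimal sketch of that arithmetic; the function names are illustrative, and the elapsed times in the usage note are hypothetical placeholders, not measurements from the chart.

```python
def speedup(base_time, time):
    """Speedup of a run relative to the baseline run (both in seconds)."""
    if base_time <= 0 or time <= 0:
        raise ValueError("elapsed times must be positive")
    return base_time / time


def parallel_efficiency(base_cores, base_time, cores, time):
    """Fraction of ideal (linear) scaling achieved going from base_cores to cores."""
    if base_cores <= 0 or cores <= 0:
        raise ValueError("core counts must be positive")
    ideal = cores / base_cores  # linear scaling would give exactly this speedup
    return speedup(base_time, time) / ideal


def scaling_table(runs):
    """Summarize runs given as {core_count: elapsed_seconds}.

    Returns a list of (cores, speedup, efficiency) tuples sorted by core
    count, using the smallest core count as the baseline.
    """
    base_cores = min(runs)
    base_time = runs[base_cores]
    return [
        (
            cores,
            speedup(base_time, runs[cores]),
            parallel_efficiency(base_cores, base_time, cores, runs[cores]),
        )
        for cores in sorted(runs)
    ]


if __name__ == "__main__":
    # Hypothetical elapsed times in seconds, for illustration only.
    example_runs = {4: 1000.0, 32: 160.0}
    for cores, s, e in scaling_table(example_runs):
        print(f"{cores:>3} cores: speedup {s:.2f}, efficiency {e:.1%}")
```

With these placeholder numbers, growing from 4 to 32 cores gives a speedup of 6.25 against an ideal of 8, or about 78% efficiency. Running the same calculation on measured elapsed times for each interconnect makes "better scaling" a comparable figure rather than a visual impression.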

It is difficult, and sometimes misleading, to predict real-world application performance from single points of data alone. To determine a system's performance and the interconnect of choice, one should take into consideration a set of metrics: single-point performance (bandwidth, latency, etc.), architecture characteristics (CPU utilization, the ability to overlap computation and communication, scalability, etc.), application results, field-proven experience and hardware reliability.
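One of the architecture characteristics listed above, the overlap of computation and communication, can be illustrated with a toy timing model. This is only a sketch under simplifying assumptions: each step has a fixed compute time and a fixed communication time, and a single `overlap_fraction` parameter says how much of the communication can proceed while the CPU computes. The function names and numbers are hypothetical and do not describe any specific adapter.

```python
def step_time(compute_s, comm_s, overlap_fraction):
    """Elapsed time of one step when part of the communication is hidden.

    overlap_fraction = 0.0 means communication is fully serialized with
    computation; 1.0 means it overlaps as much as the compute time allows.
    """
    if compute_s < 0 or comm_s < 0:
        raise ValueError("times must be non-negative")
    if not 0.0 <= overlap_fraction <= 1.0:
        raise ValueError("overlap_fraction must be between 0 and 1")
    # Communication can only hide behind computation that actually exists.
    hidden = min(overlap_fraction * comm_s, compute_s)
    return compute_s + comm_s - hidden


def compute_fraction(compute_s, comm_s, overlap_fraction):
    """Share of the elapsed step time spent on useful computation."""
    total = step_time(compute_s, comm_s, overlap_fraction)
    return compute_s / total if total > 0 else 0.0


if __name__ == "__main__":
    # Hypothetical step: 10 ms of computation, 4 ms of communication.
    for overlap in (0.0, 0.5, 1.0):
        t = step_time(10.0, 4.0, overlap)
        f = compute_fraction(10.0, 4.0, overlap)
        print(f"overlap {overlap:.1f}: step {t:.1f} ms, compute share {f:.0%}")
```

In this model, two interconnects with identical latency and bandwidth still produce different step times if they differ in how much communication they can overlap, which is one reason a single latency figure does not predict application performance.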

To provide better application insight, Mellanox has created the Mellanox Cluster Center. The Mellanox Cluster Center offers an environment for developing, testing, benchmarking and optimizing products based on InfiniBand technology. The center, located in Santa Clara, California, provides on-site technical support and enables secure sessions on site or remotely. More details are available on the Mellanox web site.

The author would like to thank Sagi Rotem, Gil Bloch and Brian Sparks for their input during reviews of this article.

Note: You can download a PDF version of this article.

Two other articles by Gilad, Cluster Interconnects: Real Application Performance and Beyond and Optimum Connectivity in the Multi-core Environment, are also available.

Gilad Shainer is a senior technical marketing manager at Mellanox Technologies, focusing on high performance computing. He joined Mellanox Technologies in 2001 to develop Mellanox's InfiniHost PCI-X Host Channel Adapter (HCA) device and later led the development of Mellanox's InfiniHost III Ex PCI Express HCA device. Gilad Shainer holds an MSc degree (2001, Cum Laude) and a BSc degree (1998, Cum Laude) in Electrical Engineering from the Technion Institute of Technology in Israel. He is also a member of the PCI-SIG PCI-X and PCI Express Working Groups and has contributed to the definition of the PCI-X 2.0 specifications.