Of course it all depends, but deciding to use the Cloud for HPC is not as simple as it may seem.

Computing in the "Cloud" allows computing to be purchased as a service (like electricity) and not as a product (like a generator). Made possible by operating system (OS) virtualization and the Internet, Cloud computing allows almost any server environment to be replicated (and scaled) instantly. Many web service companies find Cloud computing more economical than purchasing (or co-locating) hardware because they can pay for computing services only when needed.

The definition of a "Computing Cloud" can vary depending on the customer and vendor. The definition from Wikipedia is as follows:

Cloud computing is the delivery of computing as a service rather than a product, whereby shared resources, software, and information are provided to computers and other devices as a metered service over a network (typically the Internet).

The ability to rapidly construct and meter needed computing services is what makes the Cloud model successful for both providers and customers. Grid computing made the same promise years ago, but had issues with the rapid delivery of services. Most Grid systems offered low-level library compatibility to end users rather than the machine-level compatibility of Clouds. Offering full OS virtualization ensured full compatibility for users and eliminated the library mismatch issues that often occurred in Grid systems.

HPC In A Cloud

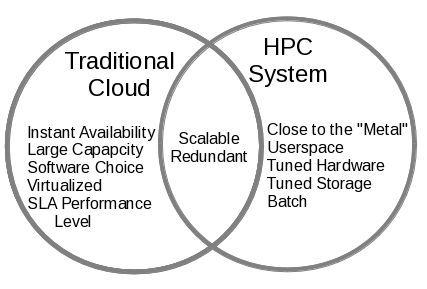

The advantages of Cloud computing are certainly attractive to HPC users. Indeed, in many cases, users cannot get enough cycles on existing systems, and Cloud HPC would be a viable economic alternative to purchasing more hardware. At first glance, Clouds would seem to be a welcome addition to the HPC toolbox. On close inspection, however, traditional Clouds do not (or cannot) offer many important aspects of HPC computing. To illustrate the lack of overlap, consider the diagram above, which lists the desirable aspects of a traditional Cloud and those of an HPC system (e.g. a typical cluster). The only shared features are scalability and reliability. The other aspects are orthogonal in nature and represent a serious mismatch between the two approaches.

Taking A Deeper Look

A "Traditional Cloud" offers features that are attractive to web service organizations. Most of these services are single, loosely coupled instances (an instance of an OS running in a virtual environment). Service level agreements (SLAs) provide the end user with guaranteed levels of service. The features that are attractive to end users, as shown in the figure above, are as follows:

- Instant Availability - Cloud offers almost instant availability of resources. The amount of "computing" can be increased or decreased quickly.

- Large Capacity - Users can instantly scale the number of applications within the Cloud. There is often no waiting for resources.

- Software Choice - Users can design their "instances" to suit their needs. There are few software restrictions in the virtual environment.

- Virtualized - Instances can be easily moved to and from similar Clouds.

- Service Level Performance - Users are guaranteed a certain minimal level of performance.

Contrast these features with those that are attractive to most HPC users:

- Close to the "Metal" - Many man-years have been invested in optimizing HPC libraries and applications to work closely with the hardware, which in turn requires specific OS drivers and hardware support.

- Userspace Communication - In HPC, user applications often need to bypass the OS kernel and communicate directly with remote user processes. This feature is generally not supported in a traditional Cloud environment.

- Tuned Hardware - HPC hardware is often selected based on communication, memory, and processor speed for a given application set.

- Tuned Storage - HPC storage is often designed for a specific application set and user base.

- Batch Scheduling - Nearly all HPC systems use a batch scheduler to share limited resources. User jobs must wait until resources become available, and resources are not managed by the user.

One shared aspect is resource scalability, that is, the ability of the user to increase compute resources quickly. Since most Cloud applications are sequential, single-process jobs, scalability is easily accomplished by adding virtual machines. In the case of HPC, scalability usually refers to an application property that determines how many cores (processors) can be applied to the problem before performance levels off. It can also represent the number of user jobs that can run on an HPC cluster. In essence, in both Clouds and HPC clusters, users can scale up to the amount of computing they require. There is a big difference, however, in how the scalability is managed. In a Cloud, more resources are created by adding more virtual machines (OS instances). In a cluster, the resource scheduler provides the physical resources for the user's application. Due to their large shared nature, Clouds often have more raw compute capacity than many large clusters.

Another shared aspect is redundancy through hardware independence. That is, the user, for the most part, neither cares about nor controls the exact hardware on which their applications run. Thus, both Clouds and clusters can schedule around broken or failed hardware.

Perhaps the biggest mismatch is in the performance area. HPC applications strive to maximize performance on particular hardware. Clouds only guarantee a "minimal" level of performance in terms of compute and I/O capability. Thus, if your maximum requirements are near the Cloud minimum, then Cloud computing may be a solution. Otherwise, the performance you were expecting may not be possible or may not be delivered on a consistent basis.

The Skinny On Scalability

One important and often misunderstood issue is HPC scalability. There is a general misconception that adding more servers to any HPC problem automatically increases performance. In HPC, scalability is loosely defined by a question: "As I add processors (cores), how much faster will my program run?" A highly scalable program can use many cores, while a less scalable program will show little or no speed-up as more cores are added. Thus, scalability is a function of the program and is well described by Amdahl's Law. There are, however, machine aspects that can contribute to scalability via Amdahl's Law. Simply put, the more "things" have to wait for data, the worse the scalability. (For those who are familiar with Amdahl's Law, this amounts to increasing the sequential portion of the program.)
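For readers who have not seen Amdahl's Law written out, the short sketch below computes the ideal speed-up for a program with a given sequential fraction. The function name `amdahl_speedup` and the sample fractions (1% and 10%) are illustrative assumptions and do not come from any particular application.

```python
def amdahl_speedup(serial_fraction: float, cores: int) -> float:
    """Ideal speed-up predicted by Amdahl's Law.

    serial_fraction is the share of run time that cannot be parallelized
    (0.0 to 1.0); cores is the number of processors applied to the rest.
    """
    if not 0.0 <= serial_fraction <= 1.0:
        raise ValueError("serial_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    parallel_fraction = 1.0 - serial_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical programs: 1% and 10% of the run time is sequential.
    for serial in (0.01, 0.10):
        for cores in (1, 8, 64, 512):
            speedup = amdahl_speedup(serial, cores)
            print(f"serial={serial:.2f} cores={cores:4d} speedup={speedup:7.2f}")
```

No matter how many cores are added, the speed-up in this model can never exceed 1 divided by the sequential fraction, which is why anything that makes cores wait (and so behaves like extra sequential time) hurts scalability.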

In HPC, the goal is to speed up applications by keeping your resources as busy as possible. If resources are waiting, then you are not getting the best utilization possible, and adding more resources may actually make things worse. As stated, scalability is a function of the program. Thus, there are programs that are highly scalable, or "embarrassingly parallel," and there are those that are difficult to scale, which we will call "interconnect sensitive."

An HPC cluster can be built using many different types of hardware. In general, the better the connection between cores (the interconnect between server nodes), the better interconnect sensitive programs will run. If a highly scalable program (e.g. image rendering) is run on a cluster with Gigabit Ethernet and then on a cluster with InfiniBand, the scalability and performance would be almost the same (all other things being equal). If, however, an interconnect sensitive program (e.g. weather modeling) were run on the same two clusters, the scalability on the Gigabit Ethernet cluster would be much lower than on the InfiniBand cluster, and the performance on the InfiniBand cluster would be much better.
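The comparison above can be sketched with a deliberately simple toy model: run time is the compute time divided across the cores, plus a per-core communication cost of latency plus transfer time for each message. Everything in the sketch is an assumption made for illustration. The latency and bandwidth figures are round numbers rather than measurements, the message counts for the "rendering-like" and "weather-like" jobs are hypothetical, and real applications overlap communication with computation in ways this model ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interconnect:
    name: str
    latency_s: float              # assumed per-message latency in seconds
    bandwidth_bytes_per_s: float  # assumed sustained bandwidth


# Round, illustrative figures only; not benchmark results.
GIGE = Interconnect("Gigabit Ethernet", 50e-6, 125e6)
INFINIBAND = Interconnect("InfiniBand", 2e-6, 3e9)


def run_time(compute_s, cores, messages, message_bytes, net):
    """Toy run time: compute split across cores plus per-core message cost."""
    if cores < 1:
        raise ValueError("cores must be at least 1")
    compute = compute_s / cores
    if cores == 1:
        return compute  # a single core has nobody to talk to
    per_message = net.latency_s + message_bytes / net.bandwidth_bytes_per_s
    return compute + messages * per_message


def speedup(compute_s, cores, messages, message_bytes, net):
    """Speed-up relative to running the same job on one core."""
    single = run_time(compute_s, 1, messages, message_bytes, net)
    return single / run_time(compute_s, cores, messages, message_bytes, net)


if __name__ == "__main__":
    # Hypothetical jobs: (label, messages per core, bytes per message).
    jobs = [("rendering-like", 10, 8192), ("weather-like", 100_000, 8192)]
    for label, messages, size in jobs:
        for net in (GIGE, INFINIBAND):
            s = speedup(100.0, 64, messages, size, net)
            print(f"{label:15s} {net.name:17s} speedup on 64 cores: {s:6.1f}")
```

Under these assumptions, the job that rarely communicates scales almost identically on both networks, while the chatty job scales far better on the lower-latency, higher-bandwidth interconnect.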

Because the underlying hardware is important to scalability for some programs, maximizing certain aspects can dramatically help improve application performance. Indeed, InfiniBand goes to great lengths to keep the application as close to the "wires" as possible. Most traditional Clouds use either Gigabit or 10-Gigabit Ethernet. HPC instances in these Clouds will absolutely work for embarrassingly parallel programs, but may struggle with those that require a better interconnect. That is, scalability and hence performance will suffer. A true HPC Cloud needs to offer a high performance interconnect that will not limit scalability of some applications.

In addition to a high performance interconnect, an HPC Cloud needs to keep the user as close as possible to the hardware. This requirement runs counter to the virtualization layer that is used on all standard Clouds. Virtualization provides great flexibility to users, but it is designed to keep them from touching the real hardware. As implemented, this requirement may limit the flexibility of a true HPC Cloud. There is, however, a way to provide high performance and Cloud flexibility to HPC applications.