A hard to swallow conclusion from the table of cluster

A confession is in order. The last two (1, 2) installments of this column have been a sales pitch of sorts. If you believe some of the things I talked about (large clusters will break, applications will need to tolerate failure and be easy to write), then you may agree that dynamic programming algorithms are one method to solve these problems. The next question is, how do we implement these ideas?

The answer is the part many may find distasteful. If you are one of the brave few who pause to think about how we are going to achieve pervasive cluster (parallel) computing, then take a bite of this column. The rest of you weenies should at least nibble at the edges.

Think About It

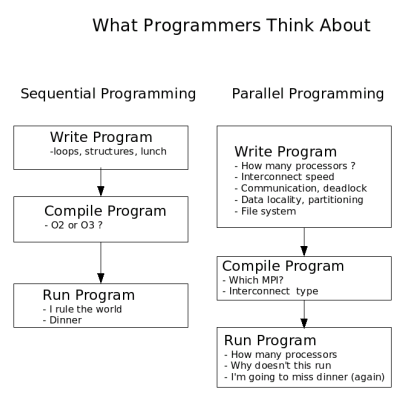

As a means to summarize the current state of parallel programming, I have created Figure One. It summarizes what programmers tend to think about at various stages of writing, compiling, and running programs. Certainly other things can be added, but you get the idea.

It should be noted that compilers for sequential applications do a fair amount of optimization, scheduling, and communication for a single processor. In a parallel environment, these issues are often too difficult for the compiler to handle, and thus this work is shifted to the user. Indeed, parallel programming is hard because the programmer is responsible for managing many more interconnected parameters than a sequential programmer is.

If parallel multi-core clusters are going to become mainstream, we need to push many of these responsibilities back into the compiler somehow. In addition, as I outlined previously, we need applications to tolerate failure because the statistics of hardware failure will rule the day on large clusters.

The goal, or my wish list as it were, is therefore a programming method that lets me create programs that are fault tolerant, dynamically scalable (from one to thousands of processors), and co-operative with no central point of control. Most importantly, it should keep me focused on the problem and not the architecture.

Moving in the Right Direction

The "making parallel easier" challenge is by no means new and has been taken up by many people. At one end of the spectrum are the "message passing library" solutions. These libraries include things like MPI and PVM. The main advantage of this approach is that it is relatively easy to implement and can be added as an extension to existing standard languages. The extension feature is very important. For historical reasons, much of the HPC code base is written in Fortran. Extending these codes to work in parallel by adding library calls instead of re-writing the entire program makes for a good economic argument. When you consider that many of the early and still useful HPC codes may contain over a million lines of functioning code, you gain a new understanding for these types of arguments.

A similar approach using threaded programming is accomplished by the OpenMP standard. OpenMP defines a set of directives that tell the compiler where to generate threaded code that calls the OpenMP runtime library. This method can be effective in preserving existing codes because the directives appear as comments (or, in C and C++, as pragmas) and do not change sequential program behavior when they are ignored.

Extensions to the Fortran standard (F90, F95, HPF) have included features to aid in parallel optimization by compilers. However, these improvements still rely on the programmer having some knowledge of how well various parts of a program will map to a particular parallel environment.

Originally developed for shared memory computers by David Gelernter and Nicholas Carriero at Yale University, the Linda extensions have seen some success in the HPC world. (Most notable is their use by the Gaussian software package.) Linda can extend almost any programming language by introducing a shared "tuple space". Using just four additional commands, the programmer dumps work into the tuple space, where other parts of the program, running on the same or another processor, can retrieve it, calculate, and return the result to the tuple space, where it is then retrieved. This approach has many advantages: it is dynamic, it is scalable, and it is very clean. The biggest drawback for clusters is the use of a central shared tuple space.
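To make the tuple space idea concrete, here is a minimal sketch in Python. It is not the real Linda implementation or its API; it only imitates the four classic Linda operations (`out`, `in`, `rd`, and `eval`) using threads inside one process. The class name `TupleSpace`, the `None` wildcard, the `in_` spelling (since `in` is a Python keyword), and the "task"/"result" tags are all assumptions made for illustration.

```python
import threading


class TupleSpace:
    """Toy, single-process stand-in for a Linda tuple space (illustrative only)."""

    def __init__(self):
        self._tuples = []
        self._cond = threading.Condition()

    def out(self, *tup):
        """Drop a tuple into the space and wake any waiting readers."""
        with self._cond:
            self._tuples.append(tup)
            self._cond.notify_all()

    def _find(self, pattern):
        # None in a pattern position acts as a wildcard (an assumption of this sketch).
        for tup in self._tuples:
            if len(tup) == len(pattern) and all(
                p is None or p == v for p, v in zip(pattern, tup)
            ):
                return tup
        return None

    def in_(self, *pattern):
        """Block until a matching tuple exists, then remove and return it."""
        with self._cond:
            while True:
                tup = self._find(pattern)
                if tup is not None:
                    self._tuples.remove(tup)
                    return tup
                self._cond.wait()

    def rd(self, *pattern):
        """Block until a matching tuple exists, then return it without removing it."""
        with self._cond:
            while True:
                tup = self._find(pattern)
                if tup is not None:
                    return tup
                self._cond.wait()

    def eval(self, fn, *args):
        """Run fn(*args) in a new thread and 'out' the tuple it returns."""
        thread = threading.Thread(target=lambda: self.out(*fn(*args)))
        thread.start()
        return thread


def worker(space):
    """Pull tasks until a negative number (a stop marker) arrives."""
    while True:
        _, n = space.in_("task", None)
        if n < 0:
            return
        space.out("result", n, n * n)


def run_demo(num_workers=3, tasks=(1, 2, 3, 4, 5)):
    """Square each non-negative task value using workers that only share the space."""
    space = TupleSpace()
    threads = [threading.Thread(target=worker, args=(space,)) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for n in tasks:
        space.out("task", n)
    results = {}
    for _ in tasks:
        _, n, squared = space.in_("result", None, None)
        results[n] = squared
    for _ in threads:
        space.out("task", -1)  # one stop marker per worker
    for t in threads:
        t.join()
    return results
```

Notice that the master never names a worker and the workers never name the master, so the number of workers can change without touching the program logic. Notice also that everything funnels through one `TupleSpace` object, which is the central-point drawback mentioned above.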

Unified Parallel C (UPC) is an extension of the C programming language covered in Linux Magazine (March 2006). Parallelism is expressed using an explicitly parallel execution model, a shared address space, synchronization primitives, a memory consistency model, and memory management primitives. UPC is relatively new and not used widely. It can run on clusters, but the words "explicitly parallel" are not what I want as part of my idealized programming experience.

At the other end of the language spectrum is a start-from-scratch approach called ZPL (short for Z-level Programming Language). ZPL is an array programming language designed to replace common programming languages used in engineering and scientific applications. The thing I like about ZPL is that it relies on implicit parallelism (parallelism that is implied by your program and not explicitly expressed by you). The design goal for ZPL was to obtain machine-independent high performance. As a result, ZPL programs run fast on both sequential and parallel computers. The clean-sheet-of-paper approach offered by ZPL is interesting, but often distasteful to many programmers because of the need to start from scratch. The ZPL team gets extra points, however, because they have created a comic book on how to use ZPL.

Our brief survey of parallel programming languages is not intended to be an exhaustive or complete list of options, but rather some examples of how people have approached the problem of making parallel programming easier. Before we jump off the deep end, there is one more approach that deserves mentioning.

Push a Button

Many have suggested, some have tried, and few have succeeded with automatically converting existing codes to parallel codes. On paper it sounds like a good idea. In reality it is quite another story. There have been some successes with automatic conversion; however, there are two very big problems (among a raft of smaller problems). The first problem is that of pointers. Pointers are great aids for programmers, but when analyzing a code for parallelism, they create difficulties. Automatically breaking a program into many parts means that careful attention must be given to shared variables. Tracing data dependencies is hard enough in static structures, but with pointers all bets are off. The other large problem is that of the algorithm. Parallel execution is often accomplished by reworking the algorithm. Automatic tools can understand and restructure code blocks, but they cannot understand and restructure algorithms. There is more of an interesting story here, but that will have to wait for another column.
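The pointer problem can be shown in a few lines. The sketch below uses Python references rather than C pointers, but the aliasing issue is the same. The function names `shift_add` and `shift_add_snapshot` are hypothetical; the second one only imitates what would happen if every loop iteration ran at once and read the original input values.

```python
def shift_add(dst, src):
    """Sequential loop: each element of dst is the previous element of src plus one."""
    for i in range(1, len(src)):
        dst[i] = src[i - 1] + 1
    return dst


def shift_add_snapshot(dst, src):
    """Imitates a naive parallel run: every iteration sees the ORIGINAL src values."""
    original = list(src)
    for i in range(1, len(original)):
        dst[i] = original[i - 1] + 1
    return dst


# Case 1: dst and src are different lists, so the iterations are independent
# and both versions agree.
separate_seq = shift_add([0, 0, 0, 0], [0, 0, 0, 0])
separate_par = shift_add_snapshot([0, 0, 0, 0], [0, 0, 0, 0])

# Case 2: dst and src are the SAME list, so iteration i depends on iteration i-1
# and the two versions disagree.
data = [0, 0, 0, 0]
aliased_seq = shift_add(data, data)
data = [0, 0, 0, 0]
aliased_par = shift_add_snapshot(data, data)
```

The loop body is identical in both cases. Whether it can be split across processors depends entirely on whether the two arguments refer to the same memory, which an automatic tool often cannot determine by reading the code.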

I Do Declare

This is the part of the column where we need to call up those few remaining neurons that have stubbornly remained outside the box of conventional wisdom. To achieve my goal of moving the programming experience closer to the problem and further away from the computer, we will need to lift the programmer to a higher level of abstraction. That is, the programmer must not be allowed to think about how the program will be run on the computer. In the same way, Fortran lifted the original HPC programmers above the minutiae of assembly language programming and closer to the problem they wanted to program. (Recall that Fortran was short for FORmula TRANslation language.)

In general, approaches to programming can be broken into two main groups, imperative and declarative. Imperative programming is what most people have done in the past. Fortran, C, Basic, Java, Perl, and Python all fall into the imperative camp, where the computation retains knowledge of the system state. In an imperative program, you are telling the computer "how to solve" your problem. For instance, you assign data to a variable and continue until you reach a limit. Program flow is assumed to be from one line to the next.

Declarative programming, on the other hand, allows the programmer to say what is to be done, but not how. The declarative compiler/interpreter uses implied knowledge from the program to figure out how to solve the problem. The programmer assumes nothing about the system state when writing the program. Instead of moving from one line to the next, declarative programs often resolve or satisfy the current line of the program by using other lines of the program. This distinction allows the underlying language to determine "how" to resolve the current line.

In a way, declarative programming insulates the programmer from the internals of the machine (the "how" to solve it part). For all the declarative programmer knows, his computer could be little monkeys and typewriters. The insulation has tremendous advantages. It lets the compiler figure out the best way to optimize for a given computing environment. The ZPL language is a great example of this method. Because the programmer has not expressed "how" to solve a matrix operation, but has only described what they want to do, the compiler can optimize for a particular environment. If they had explicitly told the computer how to do the matrix operation, the compiler would be constrained to work within the context of implied (implicit) program flow.
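A small sketch may help here. Python is an imperative language, so this only approximates the declarative style, and all of the function names are made up for illustration. The first function spells out "how" with an index, an accumulator, and a fixed order. The second states only "what" (the sum of the squares) and leaves the evaluation strategy to whatever `mapper` it is handed, which here stands in for the compiler or run-time system.

```python
from concurrent.futures import ThreadPoolExecutor


def square(x):
    return x * x


def sum_squares_imperative(values):
    """The 'how' version: explicit index, explicit state, fixed order."""
    total = 0
    for i in range(len(values)):
        total += values[i] * values[i]
    return total


def sum_squares_declarative(values, mapper=map):
    """The 'what' version: no loop order or state is stated by the programmer."""
    return sum(mapper(square, values))


def sum_squares_pooled(values, workers=4):
    """Same description as above, evaluated by a thread pool instead of built-in map."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum_squares_declarative(values, mapper=pool.map)
```

The point is not speed (a thread pool will not make this toy faster). The point is that `sum_squares_declarative` did not change when the execution strategy did, which is the insulation described above.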

| Sidebar: The Tale of Patent Number 5471622 |

|

As you may have guessed, I have spent a fair amount of time thinking about these ideas. Actually, over ten years ago, I thought so hard about it that I managed to get a patent entitled: "Run-time system having nodes for identifying parallel tasks in a logic program and searching for available nodes to execute the parallel tasks." Basically, the patent outlined a dynamic method of running Prolog programs on directly connected processors (for those who remember the Inmos Transputer). The method is applicable to clusters, and in my last column (April 2006) I outlined the method of the patent with some obvious (and not so obvious) refinements. Contrary to the patent system of today, the process of obtaining the patent spanned six years (1989-1995) and required that I argue and demonstrate the "non obvious nature" of the invention. The original patent has been abandoned due to my belief that the current patent system in the US is quite broken and does very little to protect unique inventions, because anything is patentable at this point in time. Sharing these ideas and creating "prior art" now seems the best way to ensure that they will not get stolen by yet another obvious patent. For those interested in reading the patent, a small amount of googling will reward you with enough lawyer speak to last a year or two.

|

Declarative approaches sound nice. In fact, they are very elegant. Some examples you may have encountered before are SQL, Lisp, Scheme, Prolog, Haskell, and others. Declarative applications can often be written in fewer lines of code than their imperative counterparts, as you are not expending resources to control the machine. If you use SQL, you will notice that from the programmer's point of view, you do not tell the computer how to resolve queries, which are often done in parallel. Fewer lines of code results in fewer bugs and better productivity. The problem with declarative approaches is often efficiency. The abstraction layer has a cost. To be fair, some declarative approaches have minimal imperative features to aid in programming (we are not going to venture down that path today).
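The SQL case is easy to try at home. The sketch below uses Python's standard `sqlite3` module with an in-memory database; the `jobs` table, its columns, and the function name are invented for this example. Note that SQLite evaluates this particular query on a single thread. The point is only that the query text says nothing about loops, ordering of work, or processors, so a different database engine would be free to run the same statement in parallel.

```python
import sqlite3


def total_seconds_by_node(rows):
    """Sum run time per node; rows is an iterable of (node, seconds) pairs."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE jobs (node TEXT, seconds REAL)")
        conn.executemany("INSERT INTO jobs VALUES (?, ?)", rows)
        # The query states WHAT is wanted; the engine decides HOW to compute it.
        cursor = conn.execute(
            "SELECT node, SUM(seconds) FROM jobs GROUP BY node ORDER BY node"
        )
        return cursor.fetchall()
    finally:
        conn.close()
```

Calling `total_seconds_by_node([("n1", 2.0), ("n2", 3.0), ("n1", 1.5)])` returns one `(node, total)` pair per node. An imperative version would need a dictionary, a loop, and a sort, all of which pin down the order of execution.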

The Explicit Implications

Now that I have breezed through enough computer science topics to make your head spin, let's try to tie this up in a neat little package.

My experience has shown that parallel computing includes a large cost for application development. In addition, as clusters get bigger, the traditional way we create and run applications may not work well in "fault expected" environments. Existing applications are probably not going to be re-written any time soon, so my remaining comments have to do with those killer applications that have yet to be written.

In order to solve the problems I have outlined at a reasonable cost, I propose that a declarative approach to parallel computing is one of the promising avenues in which to proceed. As distasteful as it sounds, I see no other way out of our current conundrum: the hard-to-program, cantankerous beasts we call clusters. If programmers can create and test ideas quickly on any computer (one node or ten thousand nodes) and not worry about how the program gets executed, then clusters will become more useful to everyone.

There is no doubt that declarative approaches will hurt efficiency and lead many to dismiss this approach to parallel computing. But let's take a look at the economics for a minute. The cost of computing power is going down. Dual cores now cost as much as single cores did last year, so the effective computing power per dollar has doubled in the last year. This trend will continue. Now consider what costs will increase. The cost of people will always rise, and hence the cost to create software will as well. Where should the effort to enhance efficiency be focused?

Two columns ago I started with my cluster wish list. My solution to this list looks like a sandwich with three distinct parts, many of which do not exist just yet. We start off with a layer of inexpensive and massively parallel hardware, slap on a layer of dynamic execution methods, and top it off with a declarative programming interface. Goes great with a side of Linux. Now get in the kitchen and get to work.

Note: This article is the last of a three-part series. The first and second parts are:

- Part One: Cluster Programming: You Can't Always Get What You Want

- Part Two: Cluster Programming: The Ignorance is Bliss Approach

| Sidebar: Resources |

|

OpenMP

HPF - High Performance Fortran

ZPL - parallel matrix language

|

This article was originally published in Linux Magazine. It has been updated and formatted for the web. If you want to read more about HPC clusters and Linux you may wish to visit Linux Magazine.

Douglas Eadline can be found swinging randomly through the trees here at ClusterMonkey.