A hard to swallow conclusion from the table of cluster

A confession is in order. The last two (1, 2) installments of this column have been a sales pitch of sorts. If you believe some of the things I talked about (large clusters will break, and applications will need to tolerate failure and be easy to write), then you may agree that dynamic programming algorithms are one method to solve these problems. The next question is, how do we implement these ideas?

The answer is the part many may find distasteful. If you are one of the brave few who pause to think about how we are going to achieve pervasive cluster (parallel) computing, then take a bite of this column. The rest of you weenies should at least nibble at the edges.

Think About It

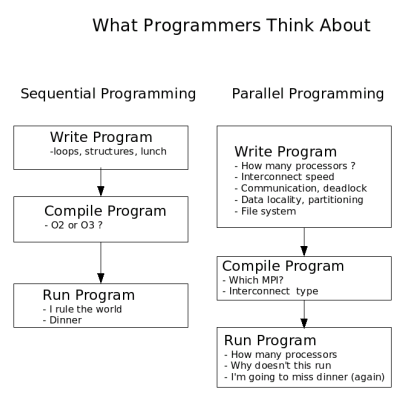

As a means to summarize the current state of parallel programming, I have created Figure One. It shows what programmers tend to think about at the various stages of writing, compiling, and running programs. Certainly other things can be added, but you get the idea.

It should be noted that compilers for sequential applications do a fair amount of optimization, scheduling, and communication for a single processor. In a parallel environment, these issues are often too difficult for the compiler to handle, and thus this work is shifted to the user. Indeed, parallel programming is hard because the programmer is responsible for managing many more interconnected parameters than a sequential programmer is.

If parallel multi-core clusters are going to become mainstream, we need to push many of these responsibilities back into the compiler somehow. In addition, as I outlined previously, we need applications to tolerate failure because the statistics of hardware failure will rule the day on large clusters.

The goal, or my wish list as it were, is therefore a programming method that lets me create programs that are fault tolerant, dynamically scalable (from one to thousands of processors), and co-operative with no central point of control. And, most importantly, it keeps me focused on the problem and not the architecture.

Moving in the Right Direction

The "making parallel easier" challenge is by no means new and has been taken up by many people. At one end of the spectrum are the "message passing library" solutions. These libraries include things like MPI and PVM. The main advantage of this approach is that it is relatively easy to implement and can be added as an extension to existing standard languages. The extension feature is very important. For historical reasons, much of the HPC code base is written in Fortran. Extending these codes to work in parallel by adding library calls instead of re-writing the entire program makes for a good economic argument. When you consider that many of the early and still useful HPC codes may contain over a million lines of functioning code, you gain a new understanding of these types of arguments.

A similar approach using threaded programming is accomplished by the OpenMP standard. OpenMP defines a set of directives that tell the compiler which parts of the program to run in parallel; the compiler then generates the threaded code and links in the OpenMP runtime library. This method can be effective in preserving existing codes because the directives appear as comments (in Fortran) or pragmas (in C and C++) that a compiler without OpenMP support ignores, so sequential program behavior does not change.
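
To make the message passing style concrete, here is a minimal sketch in Python. It is not MPI or PVM; it only imitates the explicit send and receive pattern using threads and queues from the standard library, and the names `worker` and `parallel_sum` are invented for illustration. Notice how much of the code is about splitting and moving data rather than about the sum itself.

```python
import queue
import threading


def worker(rank, inbox, outbox):
    """Receive one chunk of numbers, sum it, and send the partial result back."""
    chunk = inbox.get()              # explicit "receive"
    outbox.put((rank, sum(chunk)))   # explicit "send"


def parallel_sum(data, n_workers=4):
    """Sum `data` by partitioning it by hand across `n_workers` workers."""
    # The programmer, not the compiler, decides how the work is split.
    chunks = [data[i::n_workers] for i in range(n_workers)]
    inboxes = [queue.Queue() for _ in range(n_workers)]
    results = queue.Queue()
    threads = [
        threading.Thread(target=worker, args=(rank, inboxes[rank], results))
        for rank in range(n_workers)
    ]
    for t in threads:
        t.start()
    for rank, chunk in enumerate(chunks):
        inboxes[rank].put(chunk)     # explicit "send" of each chunk
    # Explicit "gather": collect one partial result per worker.
    partials = [results.get() for _ in range(n_workers)]
    for t in threads:
        t.join()
    return sum(value for _, value in partials)
```

Calling `parallel_sum(list(range(1, 101)))` gives the same answer as the built-in `sum`, but the partitioning, communication, and collection steps are all the programmer's responsibility.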

Later Fortran standards and extensions (F90, F95, and HPF) have included features to aid in parallel optimization by compilers. However, these improvements still rely on the programmer having some knowledge of how well various parts of a program will map to a particular parallel environment.

Originally developed for shared memory computers by David Gelernter and Nicholas Carriero at Yale University, the Linda extensions have seen some success in the HPC world. (Most notable is the use by the Gaussian software package.) Linda can extend almost any programming language by introducing a shared "tuple space". Using just four additional commands, the programmer dumps work into the tuple space, where other parts of the program, running on the same or another processor, can retrieve it, calculate, and return the result to the tuple space, where it is then retrieved. This approach has many advantages: it is dynamic, it is scalable, and it is very clean. The biggest drawback for clusters is the use of a central shared tuple space.
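
The tuple space idea can be sketched in a few lines of Python. This is a toy, single-process illustration and not the real Linda implementation: the method names follow Linda's four classic operations (`out`, `in`, `rd`, and `eval`), with `in_` renamed because `in` is a Python keyword, `None` standing in as a wildcard field, and `eval` simplified to "run a function in a thread and put its result tuple into the space". The `square_worker` and `run_demo` helpers are hypothetical.

```python
import threading


class TupleSpace:
    """A toy in-process tuple space (an illustration, not real Linda)."""

    def __init__(self):
        self._tuples = []
        self._cond = threading.Condition()

    def out(self, *tup):
        """Put a tuple into the space and wake any waiting readers."""
        with self._cond:
            self._tuples.append(tup)
            self._cond.notify_all()

    def _find(self, pattern):
        # A None field in the pattern matches any value in that position.
        for tup in self._tuples:
            if len(tup) == len(pattern) and all(
                p is None or p == f for p, f in zip(pattern, tup)
            ):
                return tup
        return None

    def _wait_for(self, pattern):
        # Caller must hold the condition lock.
        tup = self._find(pattern)
        while tup is None:
            self._cond.wait()
            tup = self._find(pattern)
        return tup

    def in_(self, *pattern):
        """Block until a tuple matches, then remove and return it."""
        with self._cond:
            tup = self._wait_for(pattern)
            self._tuples.remove(tup)
            return tup

    def rd(self, *pattern):
        """Block until a tuple matches, then return it without removing it."""
        with self._cond:
            return self._wait_for(pattern)

    def eval(self, func, *args):
        """Run func(*args) in a new thread and put its result tuple in the space."""
        t = threading.Thread(target=lambda: self.out(*func(*args)))
        t.start()
        return t


def square_worker(space):
    """Take ("task", n) tuples until a negative n arrives."""
    count = 0
    while True:
        _, n = space.in_("task", None)
        if n < 0:
            return ("done", count)
        space.out("result", n, n * n)
        count += 1


def run_demo(n_workers=2, tasks=(1, 2, 3, 4)):
    space = TupleSpace()
    threads = [space.eval(square_worker, space) for _ in range(n_workers)]
    for n in tasks:
        space.out("task", n)             # dump work into the space
    results = {}
    for n in tasks:
        _, key, value = space.in_("result", n, None)
        results[key] = value             # retrieve finished work
    for _ in range(n_workers):
        space.out("task", -1)            # one stop marker per worker
    for t in threads:
        t.join()
    return results
```

The producer never names a worker and the workers never name the producer; both only talk to the space. That is what makes the model dynamic, and it is also why the single shared space becomes the sticking point on a cluster.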

Unified Parallel C (UPC) is an extension of the C programming language covered in Linux Magazine (March 2006). Parallelism is expressed using an explicitly parallel execution model, a shared address space, synchronization primitives, a memory consistency model, and memory management primitives. UPC is relatively new and not used widely. It can run on clusters, but the words "explicitly parallel" are not what I want as part of my idealized programming experience.

At the other end of the language spectrum is a start from scratch approach called ZPL (short for Z-level Programming Language). ZPL is an array programming language designed to replace common programming languages used in engineering and scientific applications. The thing I like about ZPL is that it relies on implicit parallelism (parallelism that is implied by your program and not explicitly expressed by you). The design goal for ZPL was to obtain machine-independent high performance. As a result, ZPL programs run fast on both sequential and parallel computers. The clean sheet of paper approach offered by ZPL is interesting, but often distasteful to many programmers because of the need to start from scratch. The ZPL team gets extra points, however, because they have created a comic book on how to use ZPL.

Our brief survey of parallel programming languages is not intended to be an exhaustive list of options, but rather some examples of how people have approached the problem of making parallel programming easier. Before we jump off the deep end, there is one more approach that deserves mentioning.

Push a Button

Many have suggested, some have tried, and few have succeeded with automatically converting existing codes to parallel codes. On paper it sounds like a good idea. In reality it is quite another story. There have been some successes with automatic conversion; however, there are two very big problems (among a raft of smaller problems). The first problem is that of pointers. Pointers are great aids for programmers, but when analyzing a code for parallelism, they create difficulties. Automatically breaking a program into many parts means that careful attention must be given to shared variables. Tracing data dependencies is hard enough in static structures, but with pointers all bets are off. The other large problem is that of the algorithm. Parallel execution is often accomplished by reworking the algorithm. Automatic tools can understand and restructure code blocks, but they cannot understand and restructure algorithms. There is more of an interesting story here, but that will have to wait for another column.
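
The pointer problem can be illustrated with a small sketch. Python has no raw pointers, so this example assumes two names that may refer to the same list as a stand-in for pointer aliasing; the function names are invented for illustration. The second function models what a naive parallelizer would do if it assumed every iteration were independent: all reads happen before any write lands.

```python
def shift_sequential(dst, src):
    """dst[i] = src[i - 1] + 1 for i >= 1, executed strictly in order."""
    for i in range(1, len(dst)):
        dst[i] = src[i - 1] + 1


def shift_as_if_parallel(dst, src):
    """Same loop body, but every iteration reads the original src values,
    as if all iterations had started at the same time."""
    snapshot = list(src)             # all "reads" happen first
    for i in range(1, len(dst)):
        dst[i] = snapshot[i - 1] + 1


# Case 1: dst and src are different lists, so both versions agree.
a, b = [0, 0, 0, 0], [0, 0, 0, 0]
shift_sequential(a, [0, 0, 0, 0])
shift_as_if_parallel(b, [0, 0, 0, 0])
independent_agree = (a == b)         # both are [0, 1, 1, 1]

# Case 2: dst and src are the SAME list (aliased), so each iteration
# depends on the one before it and the two versions disagree.
c, d = [0, 0, 0, 0], [0, 0, 0, 0]
shift_sequential(c, c)               # c becomes [0, 1, 2, 3]
shift_as_if_parallel(d, d)           # d becomes [0, 1, 1, 1]
aliased_agree = (c == d)
```

Nothing in the loop body reveals which case applies. A tool that cannot prove the two names never overlap has to assume they might, and so it must leave the loop sequential.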